Blog Archives

Not all SSDs are the same

Happy Lunar New Year! Chinese communities around the world ushered in the Year of the Water Dragon yesterday. To all my friends and family, and readers of my blog, I wish you a prosperous and auspicious Chinese New Year!

Over the holidays, I have been keeping up with the progress of Solid State Drives (SSDs). I am sure many of us are mesmerized by SSDs, and storage vendors are touting the best that SSDs have to offer. But let me tell you one thing: you are probably getting the least of what the best SSDs have to offer. You might be puzzled why I say this.

Let me share a common sales pitch. Most (if not all) storage vendors will tout performance (usually IOPS) as the greatest benefit of SSDs. The performance numbers have to be compared to something, and that something is your regular spinning Hard Disk Drive (HDD). Even the slowest SSDs are at least an order of magnitude faster than HDDs in terms of IOPS. A single SSD can churn out at least 5,000 IOPS, whereas the fastest 15,000 RPM HDDs churn out about 200 IOPS (depending on the HDD vendor). Therefore, the slowest SSDs can be about 25x faster than the fastest HDDs when measured in IOPS.
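The comparison above is simple arithmetic on the two IOPS figures. Here is a minimal Python sketch; the function name `iops_speedup` is mine, and the inputs are just the rough figures quoted in this post, not measurements.

```python
def iops_speedup(ssd_iops: float, hdd_iops: float) -> float:
    """Return how many times more IOPS the SSD delivers than the HDD."""
    if hdd_iops <= 0:
        raise ValueError("hdd_iops must be positive")
    return ssd_iops / hdd_iops


# Rough figures from the post: a slow SSD at ~5,000 IOPS
# versus a fast 15,000 RPM HDD at ~200 IOPS.
print(iops_speedup(5_000, 200))  # 25.0
```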

But my intent here is to share more about SSDs with you. There’s more to know because SSDs are not all built the same. There are write-biased and read-biased SSDs; there are SLC (single-level cell) and MLC (multi-level cell) SSDs, and so on. How do you differentiate them if Vendor A touts their SSDs and Vendor B touts theirs as well? You are no longer comparing SSDs with HDDs. How do you know what questions to ask when they show you their performance statistics?

SNIA has recently released a methodology called the “Solid State Storage (SSS) Performance Test Specification (PTS)” that helps customers evaluate and compare SSD performance from a vendor-neutral perspective. There is also a whitepaper related to the SSS PTS. This is very important because we have to continue to educate the community about what is right and what is wrong.

In a recent webcast, the presenters from the SNIA SSS TWG (Technical Working Group) mentioned a few questions that I think we, as vendors and customers, should think about when dealing with an SSD sales pitch. I thought I would share them with you.

- Was the performance testing done at the SSD device level or at the file system level?

- Was the SSD pre-conditioned before the testing? If so, how?

- Were the performance results taken at a steady state?

- How much data was written during the testing?

- Where was the data written to?

- What data pattern was tested?

- What was the test platform used to test the SSDs?

- What hardware or software package(s) were used for the testing?

- Was the HBA bandwidth, queue depth and other parameters sufficient to test the SSDs?

- What type of NAND Flash was used?

- What is the target workload?

- What was the percentage weight of the mix of Reads and Writes?

- Are there any warranty life design issues?

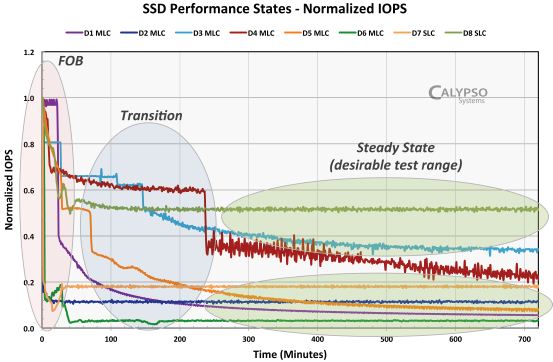

I thought that these questions were very relevant to understanding SSD performance. I also learned that SSDs behave differently throughout the life stages of the device. From a performance point of view, there are 3 distinct performance life stages:

- Fresh out of the box (FOB)

- Transition

- Steady State

As you can see from the graph below, an SSD fresh out of the box (FOB) displays very high performance numbers. Over a period of time (the graph shows minutes), it transitions into an intermediate stage of lower IOPS and finally settles into what is called the Steady State. The Steady State is the test range that gives the most representative IOPS numbers. Therefore, it is important that your storage vendor’s performance numbers are taken during this life stage.

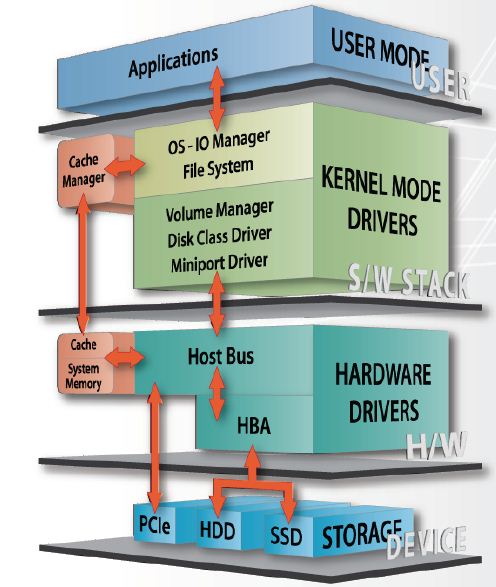
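To make the idea of a steady state more concrete, here is a minimal Python sketch of how one might check a series of per-round IOPS measurements for stability. The function name, the 5-round window, and the 20% range and 10% slope thresholds are my assumptions, modelled on my reading of the PTS steady-state definition; please consult the specification itself for the authoritative procedure.

```python
from statistics import mean


def is_steady_state(iops_rounds, window=5, max_range=0.20, max_slope=0.10):
    """Check the last `window` rounds against two stability criteria.

    Assumed criteria (not taken verbatim from the PTS):
      1. max - min of the measurements is within `max_range` of their average
      2. the drift of the least-squares fit line across the window is
         within `max_slope` of their average
    """
    if len(iops_rounds) < window:
        return False
    y = list(iops_rounds[-window:])
    x = list(range(window))
    avg = mean(y)
    if avg <= 0:
        return False

    # Criterion 1: spread of the raw measurements.
    if max(y) - min(y) > max_range * avg:
        return False

    # Criterion 2: slope of the best-fit line, expressed as total drift
    # between the first and last round of the window.
    x_bar = mean(x)
    slope = sum((xi - x_bar) * (yi - avg) for xi, yi in zip(x, y)) / sum(
        (xi - x_bar) ** 2 for xi in x
    )
    drift = abs(slope) * (window - 1)
    return drift <= max_slope * avg


# Made-up numbers for illustration only.
fresh_out_of_box = [50_000, 42_000, 30_000, 21_000, 15_000]
settled = [10_100, 9_900, 10_050, 9_950, 10_000]
print(is_steady_state(fresh_out_of_box))  # False
print(is_steady_state(settled))           # True
```

A vendor figure taken while the first check still fails would be a FOB or Transition number, not a Steady State one.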

Another consideration when interpreting SSD performance numbers is the type of test used. The test could be done at the file system level or at the device level. As shown in the diagram below, the test numbers could be taken from many different elements along the data path stack.

Performance numbers for cached data look impressive, but they are not accurate. File system performance is not a useful measure either, because the data travels through several layers that mask the true capability of the SSD. Therefore, SNIA’s performance testing is based on a synthetic device-level test, to achieve consistency and more accurate IOPS numbers.

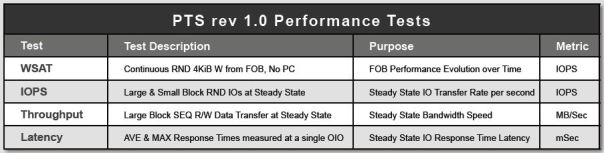

There are many other factors used to determine the most relevant performance numbers. The SNIA PTS has 4 main tests that address different aspects of the SSD’s performance. They are:

- Write Saturation test

- Latency test

- IOPS test

- Throughput test
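The quantities these tests report are related to each other, which helps when sanity-checking a vendor’s numbers. Here is a small Python sketch of two textbook relationships: throughput is IOPS multiplied by the block size, and (by Little’s law) mean latency is roughly the number of outstanding I/Os divided by IOPS. The function names and example inputs are mine and are not part of the SNIA PTS.

```python
def throughput_mb_per_s(iops: float, block_size_bytes: int) -> float:
    """Throughput in MB/s (1 MB = 1,000,000 bytes) for a given IOPS and block size."""
    return iops * block_size_bytes / 1_000_000


def mean_latency_ms(iops: float, queue_depth: int) -> float:
    """Approximate mean latency in milliseconds via Little's law.

    Little's law: outstanding I/Os = IOPS x mean latency (in seconds).
    Assumes the queue is kept full at `queue_depth` for the whole test.
    """
    if iops <= 0:
        raise ValueError("iops must be positive")
    return queue_depth * 1_000 / iops


# Illustrative inputs only: 5,000 IOPS at a 4 KiB block size, queue depth 32.
print(throughput_mb_per_s(5_000, 4096))
print(mean_latency_ms(5_000, 32))
```

This is why a high IOPS figure at a small block size and a high throughput figure at a large block size cannot be compared directly, and why the queue depth used in the test matters.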

Apple chomps Anobit

A few days ago, Apple paid US$500 million to buy Anobit, an Israeli startup that makes flash storage technology.

Obviously, one of the reasons Apple did so is to move up a notch, differentiate itself from the competition and position itself as a premier technology innovator. It has won the MP3 war with its iPod, but in the smartphone, tablet and notebook space, Apple is being challenged strongly.

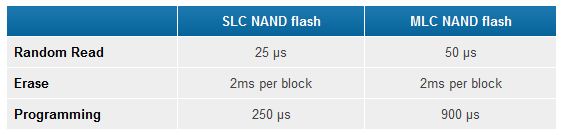

Today, flash storage technology is prevalent, and the demand to pack more capacity into a small area of flash will eventually lead to reliability issues. The most common type of NAND flash storage is MLC (multi-level cell), as opposed to the more expensive type called SLC (single-level cell).

Physically, the internal build of MLC and SLC is exactly the same. The difference is that in SLC, one cell contains 1 bit of data, while in MLC, one cell holds 2 or more bits. That’s the only difference in the physical structure of the NAND flash. However, as you can see from the diagram below, SLC has advantages over MLC.

NAND Flash uses electrical voltage to program a cell, and it is always a challenge to store bits of data in a very, very small cell. If you apply too little voltage, the bit in the cell does not register, resulting in something unreadable or an error. If you apply too much voltage, the adjacent cells are disturbed, resulting in errors in the flash. Voltage leakage is not uncommon.

The demand to pack more and more data (i.e. more bits) into one cell geometry results in greater unreliability. Though the reliability of NAND Flash storage is predictable, i.e. we would roughly know when it will fail, we will eventually reach a point where the reliability of MLC is no longer acceptable if we continue the trend of packing in more and more capacity.
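A rough way to see why more bits per cell means less reliability: a cell storing n bits has to distinguish 2^n voltage levels, so the gap between neighbouring levels shrinks quickly. The Python sketch below uses a deliberately simplified model with evenly spaced levels; the 6.0 V window is a hypothetical number chosen for illustration, not a figure from any datasheet.

```python
def voltage_levels(bits_per_cell: int) -> int:
    """Number of distinct levels a cell must distinguish to store n bits."""
    if bits_per_cell < 1:
        raise ValueError("bits_per_cell must be at least 1")
    return 2 ** bits_per_cell


def level_spacing(window_volts: float, bits_per_cell: int) -> float:
    """Gap between adjacent levels, assuming evenly spaced levels in the window.

    Simplification: real cells hold voltage distributions, not exact points.
    """
    return window_volts / (voltage_levels(bits_per_cell) - 1)


WINDOW_VOLTS = 6.0  # hypothetical usable voltage window

for bits in (1, 2, 3):
    levels = voltage_levels(bits)
    gap = level_spacing(WINDOW_VOLTS, bits)
    print(f"{bits} bit(s)/cell: {levels} levels, about {gap:.2f} V between levels")
```

With less room between levels, the same amount of disturbance or leakage is more likely to push a cell into the wrong level.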

That’s where Anobit comes in. Anobit has designed and implemented architectural changes to the way NAND Flash storage is used. In layman’s terms, the technology comes in 2 stages.

- Error reduction – by understanding what causes flash impairment. This could be cross-coupling, read disturbs, data retention impairments, program disturbs or endurance impairments.

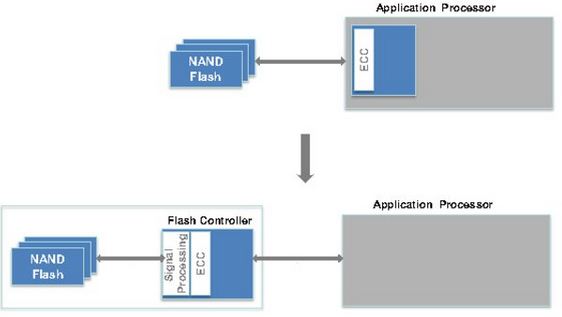

- Error Correction and Signal Processing – advanced ECC (error-correcting code), together with the patented (and other patents pending) Memory Signal Processing (TM), to improve the reliability and performance of NAND Flash storage, as shown in the diagram below:

In a nutshell, Anobit’s new and innovative approach will result in:

- More reliable MLCs

- Better performing MLCs

- Cheaper NAND Flash technology

This will indeed push NAND Flash technology towards greater innovation in flash storage in the near future. What Apple will do with Anobit’s technology is anybody’s guess, but one thing is certain: it is going to propel Apple to new heights.