Highroad to Parallel Road

Unless you work with highly parallelized file access in a large scale-out NAS environment, you probably don’t get to work much with Parallel NFS (pNFS). pNFS is part of the NFSv4.1 standard (RFC 5661), which was published in January 2010, and it addresses how the NFS protocol behaves in clustered, scale-out NAS environments. Its purpose is to provide parallel file access across distributed servers.

pNFS is heavily driven by Panasas, NetApp, EMC, IBM and Sun (now Oracle), among others. Funnily enough, the company that sticks out from the bunch is one that used to tout block storage, not files, as the way to go. That’s EMC, a company better known for its SAN solutions than its NAS (remember Celerra and IP4700?). And EMC has embraced pNFS in a big, big way. Read the blog of Chuck Hollis, EMC’s CTO for Global Marketing, here and here.

However, unknown to many, a lot of the thinking that went into pNFS is very similar to that behind an EMC product from some years ago. That product is EMC HighRoad, which in later years was renamed Multi-Path File System (MPFS).

Note: If you want to know more about the history of HighRoad/MPFS, read this blog.

The cornerstone of EMC MPFS is its File Mapping Protocol, or FMP, a robust protocol that defines the mapping of files to their addressable blocks in storage. In a nutshell, when I was responsible for this product during my time at EMC, I used to pitch MPFS to companies like this: the file request goes through NFS, but the response to the requester can come back as blocks (over iSCSI or Fibre Channel). The beauty of this was that NFSv3 was chatty and heavy, whereas delivering data as blocks via iSCSI or Fibre Channel carries less overhead.

Hence the delivery is faster, and EMC touted performance 2-4x faster than NFS. Indeed, I have seen some lab test results from EMC’s work with the Schlumberger High Performance Lab in Houston, and the numbers were impressive. I still have them on PowerPoint somewhere.
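To make the file-to-block mapping idea a little more concrete, here is a minimal Python sketch. It is not the real FMP wire format or EMC's code; the `Extent` class, the `resolve` function, the LUN names and the 512-byte block size are all assumptions made up for illustration. It only shows the general idea: a map of extents turns a byte range of a file into block reads that a client could issue directly over iSCSI or Fibre Channel.

```python
from dataclasses import dataclass
from typing import List, Tuple

BLOCK_SIZE = 512  # assumed block size in bytes, for illustration only


@dataclass(frozen=True)
class Extent:
    """One contiguous piece of a file and where it lives on block storage (hypothetical)."""
    file_offset: int  # byte offset in the file where this extent starts
    length: int       # extent length in bytes
    lun: str          # made-up volume identifier
    start_block: int  # first block of the extent on that volume


def resolve(extents: List[Extent], offset: int, length: int) -> List[Tuple[str, int, int]]:
    """Translate a byte range of a file into (lun, first_block, block_count) reads."""
    reads = []
    end = offset + length
    for ext in sorted(extents, key=lambda e: e.file_offset):
        # Overlap between the requested range and this extent.
        lo = max(offset, ext.file_offset)
        hi = min(end, ext.file_offset + ext.length)
        if lo >= hi:
            continue  # this extent does not cover any requested byte
        first = ext.start_block + (lo - ext.file_offset) // BLOCK_SIZE
        last = ext.start_block + (hi - 1 - ext.file_offset) // BLOCK_SIZE
        reads.append((ext.lun, first, last - first + 1))
    return reads


# A file made of two 4 KiB extents on two made-up LUNs.
file_map = [
    Extent(file_offset=0, length=4096, lun="lun0", start_block=100),
    Extent(file_offset=4096, length=4096, lun="lun1", start_block=900),
]
```

Calling `resolve(file_map, 3584, 1024)` returns one block read on each LUN, because the requested range straddles the boundary between the two extents. The client gets the map once over the file protocol and then moves the data itself over the block path.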

Circa 2003-2004, EMC donated the FMP code to the IETF and, as they say, the rest is history.

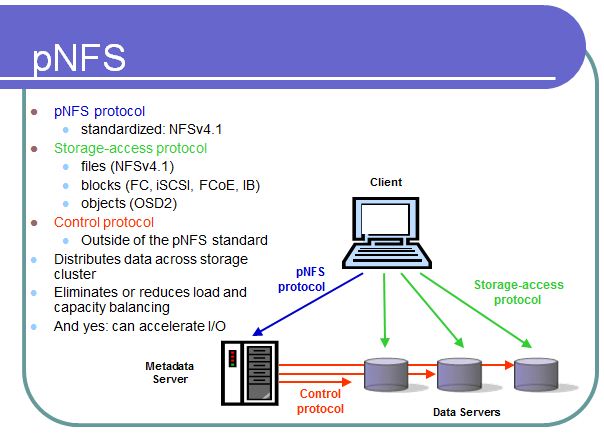

The picture below basically summarizes what pNFS is all about.

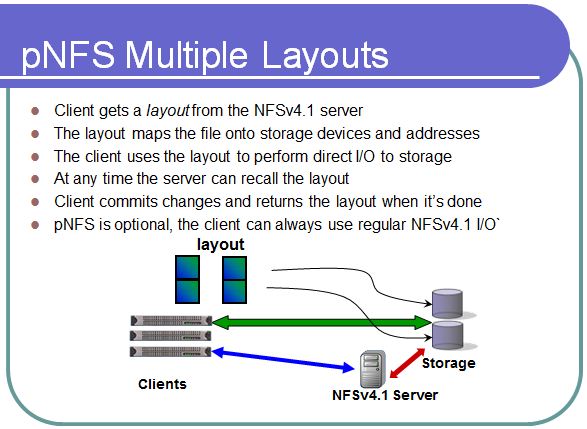

An NFSv4.1 client will basically check with a metadata server via the pNFS protocol about the layout of the distributed cluster of servers. The file could be striped across multiple nodes of the cluster, and it is the metadata server that provides a map the client uses to access the blocks or files on these nodes. This is exactly what the EMC HighRoad/MPFS File Mapping Protocol (FMP) did: mapping file requests to their corresponding blocks. See diagram below:

And one of the powerful features of pNFS is that it is not just about NFS. The green arrow you see in the above diagram is the storage-access protocol. That access protocol can be NFSv4.1, CIFS, iSCSI, Fibre Channel, FCoE, InfiniBand, or Object Storage Device (OSD).
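The metadata-server-hands-out-a-map idea can be sketched in a few lines of Python. This is a simplified illustration, not the layout format from RFC 5661: the `Layout` class, the `plan_read` function, the server names and the plain round-robin striping are all assumptions, and real layouts carry more detail. It shows how a client that already holds a layout can split one read into chunks and send each chunk to a different data server in parallel.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class Layout:
    """What a metadata server might hand to a client (hypothetical, simplified)."""
    stripe_unit: int         # bytes written to one data server before moving to the next
    data_servers: List[str]  # ordered list of data servers holding the stripes


def locate(layout: Layout, offset: int) -> Tuple[str, int]:
    """Return (data server, offset within the stripe unit) for one file offset."""
    stripe_index = offset // layout.stripe_unit
    server = layout.data_servers[stripe_index % len(layout.data_servers)]
    return server, offset % layout.stripe_unit


def plan_read(layout: Layout, offset: int, length: int) -> List[Tuple[str, int, int]]:
    """Split a byte range into per-server chunks of (server, file_offset, nbytes)."""
    chunks = []
    pos = offset
    end = offset + length
    while pos < end:
        server, within = locate(layout, pos)
        # Read up to the end of the current stripe unit or the end of the request.
        nbytes = min(layout.stripe_unit - within, end - pos)
        chunks.append((server, pos, nbytes))
        pos += nbytes
    return chunks


# Made-up layout: 64 KiB stripe units across three data servers.
layout = Layout(stripe_unit=65536, data_servers=["ds1", "ds2", "ds3"])
```

With this layout, `plan_read(layout, 60000, 80000)` yields three chunks, one per data server, which the client could fetch concurrently over whichever storage-access protocol the layout calls for.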

In order to have pNFS working, the NFS client must be NFSv4.1-ready, and that code has been made available in Linux and OpenSolaris. Other Unix vendors, no doubt, will be coming out with their NFSv4.1 implementations soon. Oooooh, there will be a Windows NFSv4.1 client coming as well!

But I want to dispel the notion that EMC is a SAN company. EMC is a very strong NAS company and if you have seen the IDC market share (ok, ok, many of you out there will argue about it), EMC is #1 in NAS. And their contribution to pNFS is immense.

Posted on November 15, 2011, in EMC, NetApp, NFS and tagged EMC, Highroad, MPFS, NAS, NetApp, NFSv4.1, Panasas, parallel NFS, pNFS.
